Securing Generative AI: How to Combat Prompt Injection Attacks

Explore how companies can protect their generative AI models against prompt injection attacks, highlighting Indrox NeuroCore’s solutions and services.

Securing Generative AI: Strategies for Combating Prompt Injection Attacks

Introduction

In the age of software development and process automation, generative artificial intelligence is radically transforming sectors such as mobile app development and the creation of AI agents. However, this technological revolution also brings significant challenges, especially when it comes to security.

What Is a Prompt Injection Attack?

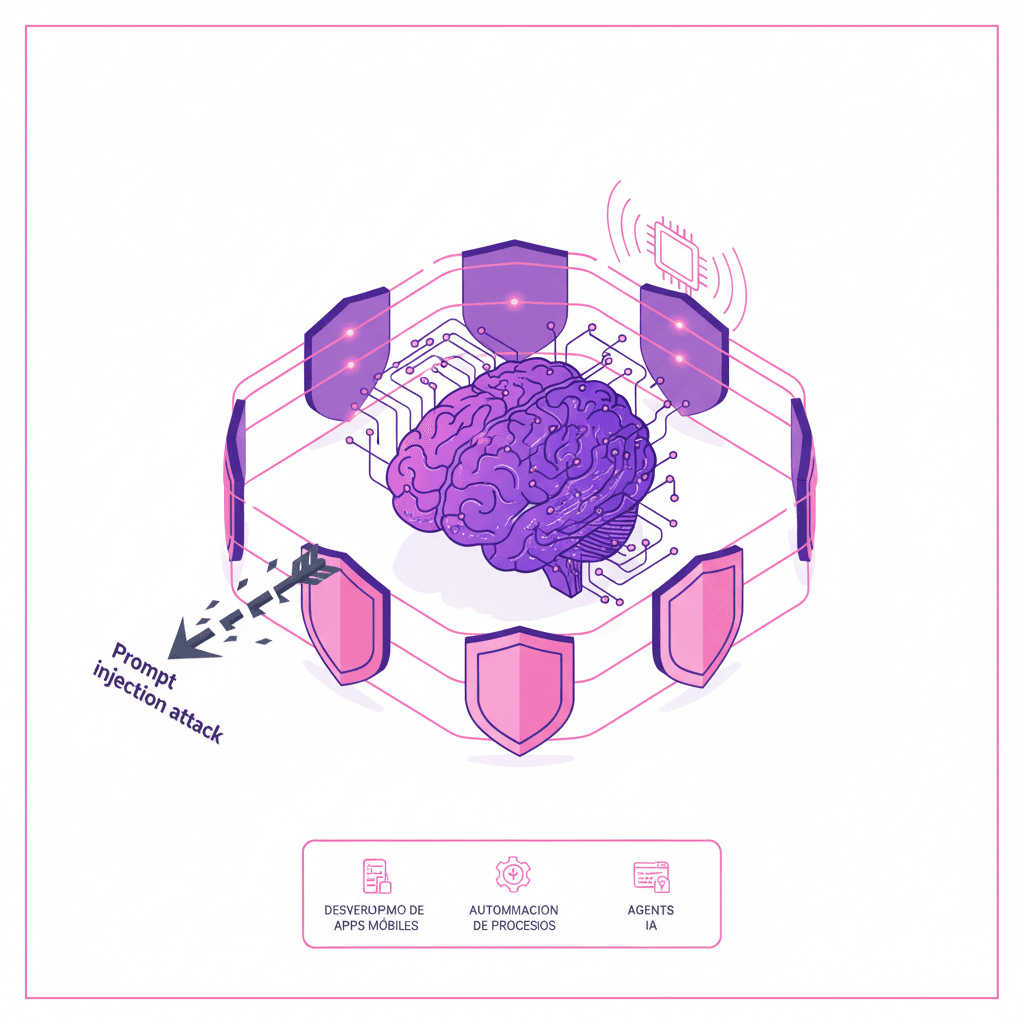

A prompt injection attack occurs when malicious inputs are fed into generative AI systems, which can disrupt their operations and alter their outputs. This type of attack is particularly dangerous because it can lead to the disclosure of sensitive data or even allow external control of critical systems. In the context of software development and the creation of AI agents, understanding and mitigating these risks is crucial.

Implementing AI Security Solutions

To strengthen security in mobile app development and other AI fields, it is essential to adopt robust security strategies. The Indrox NeuroCore suite excels in this area, offering integration of customized security measures that are vital for protecting AI systems. This technology enables effective monitoring and rigorous control of interactions with AI models, helping to prevent malicious manipulation.

Use Cases and Practical Examples

By examining real-world cases, we can see how various companies have successfully implemented security strategies in their software development and AI agent creation processes to combat prompt injection attacks. The adaptability and advanced capabilities of Indrox NeuroCore have played a crucial role in strengthening security infrastructure across a variety of organizations, protecting their developments in the field of process automation and beyond.

Conclusion

This article has explored the risks associated with prompt injection attacks and how effective solutions can be implemented to protect generative AI in fields such as software development and process automation. Indrox NeuroCore emerges as an essential tool for ensuring that AI implementations are not only innovative but also secure. If you are interested in strengthening the security of your AI systems, we invite you to contact us for a free consultation.

Indrox

Indrox technology team. Experts in custom software, applied artificial intelligence and digital transformation for companies in Peru and Latin America.

Published on April 25, 2026